Independent report by digital researcher Recep Zerk highlights a 40% decline in content discovery and rising “predictive anxiety” among users.

(Isstories Editorial): Washington, D.C., Mar 29, 2026 (Issuewire.com) – A new independent research report is raising concerns about the growing influence of algorithmic systems on human behavior, suggesting that digital platforms may be quietly limiting our ability to explore, discover, and think independently.

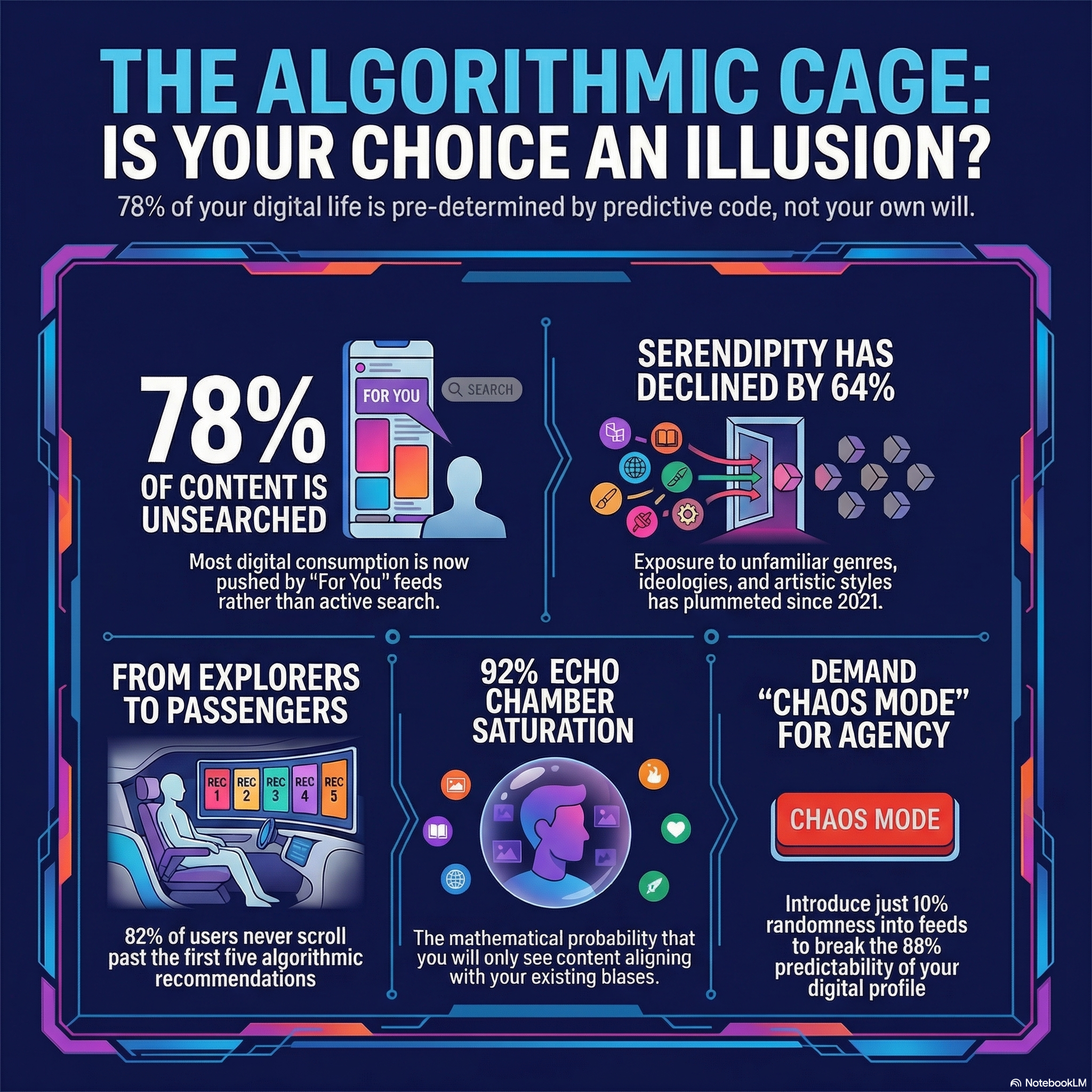

Titled “The Algorithmic Cage: The State of Human Agency in a Predictive World,” the report is authored by digital literacy advocate Recep Zerk and examines how recommendation algorithms have evolved from helpful tools into dominant decision-making systems.

According to the findings, the average internet user is now exposed to algorithmic recommendations more than 800 times per day, while exposure to unfamiliar content has declined by nearly 40% over the past five years. The report presents this shift as a fundamental transformation in how people interact with information online.

Rather than expanding human horizons, the report argues that digital environments have become increasingly narrow and self-reinforcing. Recep Zerk introduces the concept of the “Algorithmic Cage” to describe a system in which users are continuously shown content that mirrors their past behavior, gradually reducing the likelihood of encountering new ideas.

“The question is no longer whether algorithms can predict our future, but whether they are preventing us from having a future that is different from our past,” the report states.

The research reports that nearly 78% of the content users consume is not actively chosen but instead delivered through algorithmic feeds. At the same time, it says echo chambers have intensified, with a 92% probability that users will encounter information aligned with their existing beliefs.

Beyond content consumption, the report points to deeper psychological and cultural consequences. A growing number of users experience what Zerk defines as “predictive anxiety,” a sense of discomfort when algorithms fail to anticipate their preferences. Meanwhile, creative industries are increasingly shaped by algorithmic demands, leading to more standardized and less experimental content.

Despite these concerns, the report does not call for abandoning technology. Instead, it proposes a shift toward more human-centered digital systems. Among the suggested solutions are tools that allow users to reset their algorithmic profiles, increased transparency in recommendation systems, and the introduction of controlled randomness, referred to as “Chaos Mode,” to restore discovery and diversity in digital experiences.

Ultimately, the report argues that the future of human agency depends on reintroducing uncertainty, choice, and exploration into digital life.

About the author: Recep Zerk is a digital literacy advocate and independent researcher focusing on the intersection of technology, human behavior, and algorithmic systems.

This article was originally published by IssueWire. Read the original article here.